In research, the concepts of internal and external validity are central to evaluating the quality and applicability of study findings. Internal validity refers to the extent to which a study accurately establishes a cause-and-effect relationship within its controlled environment, ensuring that observed outcomes are due to the manipulated variables and not external factors. External validity, on the other hand, focuses on how well those findings can be generalized to real-world settings, populations, or conditions beyond the study. Balancing these two forms of validity is a critical challenge for researchers. High internal validity often requires tightly controlled conditions, which may limit a study’s real-world relevance, while prioritizing external validity might introduce variables that cloud causal conclusions. This article explores the definitions, importance, and trade-offs of internal and external validity, offering insights into how researchers design studies to achieve reliable and broadly applicable results.

Internal Validity

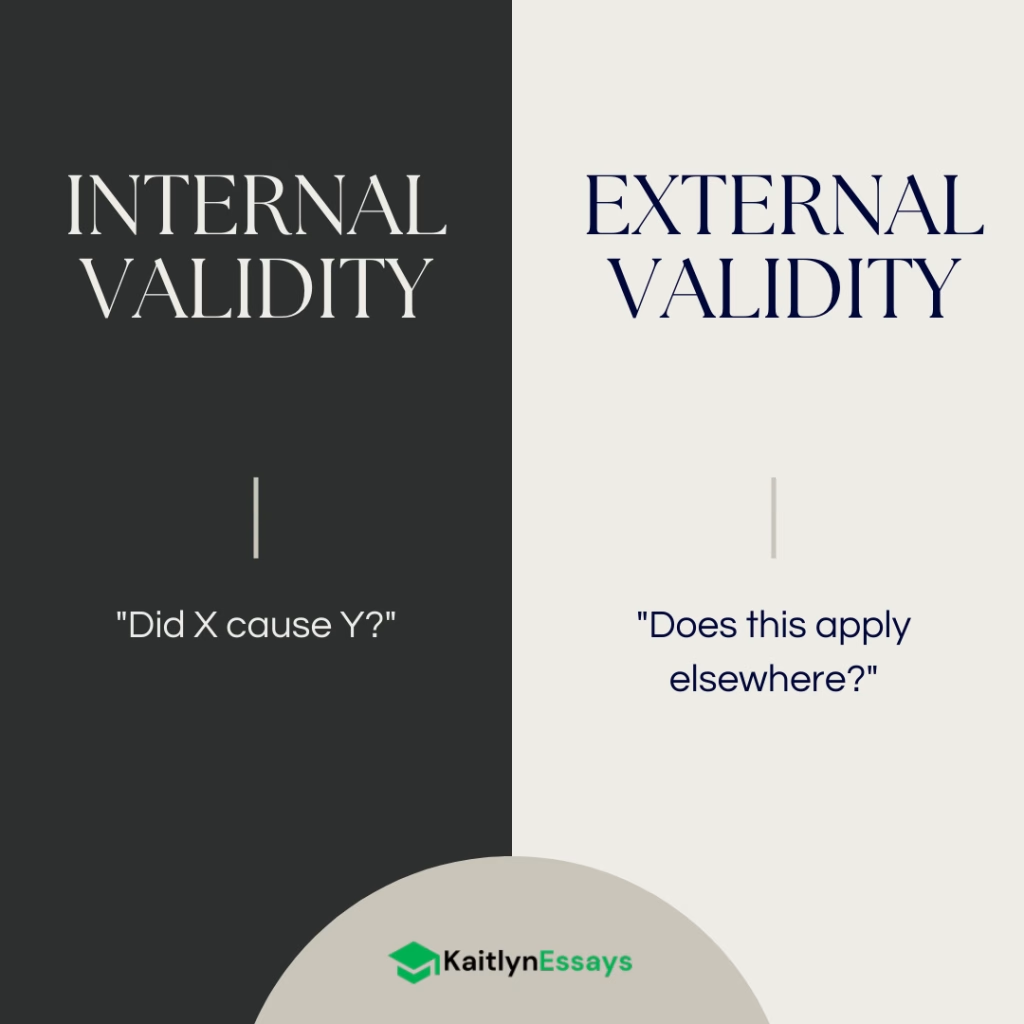

Internal validity represents the cornerstone of scientific research, determining whether we can confidently conclude that an observed relationship between variables is truly causal rather than coincidental or spurious. At its essence, internal validity asks the critical question: “Did the treatment, intervention, or independent variable actually cause the observed changes in the outcome, or could other factors be responsible?”

When a study has high internal validity, researchers can be confident that any differences observed between groups or changes measured over time are attributable to the manipulated variable, not to confounding factors or methodological flaws. This confidence is the basis for any causal claim. Without internal validity, we cannot distinguish between genuine cause-and-effect relationships and mere associations that might be explained by hidden variables or research artifacts.

Consider a simple example: if researchers want to test whether a new teaching method improves student performance, internal validity would determine whether any observed improvements can be attributed to the teaching method itself, rather than to differences in student ability, teacher enthusiasm, time of day, or countless other potential explanations. The strength of internal validity directly corresponds to how confidently we can make this causal attribution.

Key Threats to Internal Validity

Understanding threats to internal validity is crucial for both designing robust studies and critically evaluating existing research. These threats represent alternative explanations for observed results that compete with the intended causal interpretation.

Selection bias and confounding variables are among the most significant threats to internal validity. Selection bias occurs when the groups being compared differ systematically in ways beyond the treatment variable. For instance, if a study comparing two educational programs inadvertently assigns higher-achieving students to one program, any performance differences might reflect pre-existing abilities rather than program effectiveness. Confounding variables are factors, measured or unmeasured, that influence both the treatment assignment and the outcome, creating spurious relationships. Age, socioeconomic status, motivation, and health conditions frequently act as confounding variables in research studies.
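The self-selection problem described above can be made concrete with a small simulation. This is a minimal sketch under stated assumptions, not a model of any real study: the trait name `motivation`, the enrollment probabilities, and the outcome formula are all hypothetical. The program is given a true effect of zero, yet the naive group comparison still shows a large gap, because motivation drives both enrollment and the outcome.

```python
import random
import statistics

def simulate_self_selection(n=10_000, true_effect=0.0, seed=0):
    """Return the naive (enrolled minus not enrolled) difference in mean
    outcome when a hidden trait drives both enrollment and the outcome.
    All numbers here are illustrative assumptions."""
    rng = random.Random(seed)
    enrolled, not_enrolled = [], []
    for _ in range(n):
        motivation = rng.gauss(0, 1)  # the confounder
        # More motivated people are more likely to choose the program.
        enrolls = rng.random() < (0.8 if motivation > 0 else 0.2)
        # The outcome depends on motivation plus the (possibly zero) true effect.
        outcome = (2.0 * motivation
                   + (true_effect if enrolls else 0.0)
                   + rng.gauss(0, 1))
        (enrolled if enrolls else not_enrolled).append(outcome)
    return statistics.mean(enrolled) - statistics.mean(not_enrolled)

naive_gap = simulate_self_selection(true_effect=0.0)
print(f"Naive difference with a true effect of zero: {naive_gap:.2f}")
```

The printed gap is well above zero even though the program does nothing in this simulation, which is exactly the kind of spurious relationship a confounder produces.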

History effects emerge when external events occur during the study period that could influence the outcome. In a longitudinal study examining the effects of a mental health intervention, major news events, policy changes, or seasonal variations might affect participants’ well-being independently of the treatment. These historical factors can masquerade as treatment effects, particularly in studies without appropriate control groups.

Maturation effects involve natural changes that occur in participants over time, regardless of any intervention. Children naturally develop cognitively and physically as they age, patients may recover from illnesses spontaneously, and participants may simply grow tired or bored over the course of a study. These natural progressions can be mistaken for treatment effects in studies lacking proper controls.

Instrumentation changes threaten internal validity when measurement tools, procedures, or criteria shift during the study period. This might involve equipment calibration drift, changes in survey questions, different raters applying varying standards, or modifications to data collection protocols. Such changes can create artificial differences that appear to be treatment effects.

Testing effects occur when the act of measurement itself influences subsequent responses. Participants may perform better on post-tests simply due to practice with the testing format, or they may alter their behavior because they know they’re being observed. Pre-testing can also sensitize participants to the treatment, making them more or less responsive than they would be naturally.

Attrition represents a significant threat when participants drop out of studies non-randomly. If certain types of participants are more likely to discontinue participation, the remaining sample may no longer be representative, and apparent treatment effects might reflect these compositional changes rather than genuine intervention impacts.

Regression to the mean affects studies that select participants based on extreme scores. Individuals with particularly high or low initial scores tend to score closer to the average on subsequent measurements due to natural variation, measurement error, and temporary factors that influenced their initial extreme scores. This statistical phenomenon can create illusory improvement or decline that has nothing to do with any intervention.
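Regression to the mean is easy to see in a simulation. The sketch below is illustrative only and rests on stated assumptions: each person has a stable true score, and each test adds independent random noise. The function name and all parameter values are hypothetical. No intervention is applied at any point, yet the lowest scorers on the first test score noticeably higher on the second.

```python
import random
import statistics

def regression_to_mean_demo(n=20_000, cutoff_fraction=0.1, seed=1):
    """Select the lowest scorers on test 1 and return their mean score
    on test 1 and on test 2. Nothing is done to them in between."""
    rng = random.Random(seed)
    people = []
    for _ in range(n):
        true_score = rng.gauss(50, 10)          # stable ability
        test1 = true_score + rng.gauss(0, 10)   # ability plus noise
        test2 = true_score + rng.gauss(0, 10)   # same ability, fresh noise
        people.append((test1, test2))
    people.sort(key=lambda p: p[0])             # order by first score
    lowest = people[: int(n * cutoff_fraction)]
    mean_test1 = statistics.mean(p[0] for p in lowest)
    mean_test2 = statistics.mean(p[1] for p in lowest)
    return mean_test1, mean_test2

first, second = regression_to_mean_demo()
print(f"Lowest decile: test 1 mean = {first:.1f}, test 2 mean = {second:.1f}")
```

A study that enrolled only this lowest decile and then re-tested them would record an "improvement" that a control group selected the same way would show as well.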

Placebo effects and experimenter bias introduce psychological threats to internal validity. Participants may improve simply because they believe they’re receiving beneficial treatment, while researchers’ expectations can unconsciously influence how they collect, analyze, or interpret data. These effects can create apparent treatment benefits that stem from the expectations of participants and researchers rather than from the treatment itself.

Methods to Enhance Internal Validity

Researchers have developed numerous strategies to minimize threats to internal validity and strengthen causal inferences. These methods represent the methodological toolkit for building confidence in research conclusions.

Random assignment stands as the gold standard for establishing internal validity in experimental research. By randomly assigning participants to treatment and control conditions, researchers ensure that groups are equivalent on average across all possible confounding variables, both measured and unmeasured. This equivalence means that any post-treatment differences between groups can be attributed to the treatment rather than to pre-existing group differences. Random assignment doesn’t guarantee perfect group equivalence in any single study, but it eliminates systematic bias and provides a statistical foundation for causal inference.
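The mechanics of random assignment, and the covariate balance it tends to produce, can be sketched in a few lines. This is a minimal illustration, assuming a simple two-arm design with equal group sizes; the function names and the simulated `age` covariate are hypothetical.

```python
import random
import statistics

def randomly_assign(participants, seed=None):
    """Shuffle a copy of the participant list and split it in half."""
    rng = random.Random(seed)
    shuffled = list(participants)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

def mean_difference(group_a, group_b, covariate):
    """Difference in the mean of one baseline covariate between groups."""
    return (statistics.mean(p[covariate] for p in group_a)
            - statistics.mean(p[covariate] for p in group_b))

# Hypothetical participants with one baseline covariate (age in years).
data_rng = random.Random(42)
participants = [{"id": i, "age": data_rng.gauss(40, 12)} for i in range(2000)]

treatment, control = randomly_assign(participants, seed=7)
age_gap = mean_difference(treatment, control, "age")
print(f"Mean age difference between groups: {age_gap:.2f} years")
```

The age gap is small relative to the spread of ages, and the same would be expected for covariates nobody measured. A different seed gives a slightly different gap, which is the chance imbalance that can remain in any single study.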

Control groups provide the crucial comparison needed to isolate treatment effects. Without control groups, researchers cannot determine whether observed changes represent genuine treatment effects or natural variations that would have occurred anyway. Control groups can take various forms: no-treatment controls receive no intervention, placebo controls receive inactive treatments that mimic the experimental condition, and active controls receive alternative treatments for comparison purposes.

Blinding procedures address threats from expectancy effects and bias. Single-blinding prevents participants from knowing their treatment assignment, reducing placebo effects and demand characteristics. Double-blinding additionally prevents the researchers who deliver treatment and collect data from knowing participants’ assignments, reducing experimenter bias in data collection. Triple-blinding extends this protection to the people who analyze the data, though this level of blinding isn’t always feasible or necessary.

Standardized protocols ensure consistent treatment delivery and measurement across all participants and conditions. Detailed procedural manuals, training programs for research staff, and quality assurance measures minimize instrumentation threats and enhance the reliability of implementation. Standardization also facilitates replication by other researchers.

Pre-post designs with baseline measurements allow researchers to assess change over time while controlling for initial differences between participants. By measuring outcomes both before and after treatment, researchers can examine whether groups show different patterns of change, rather than simply different end-point values. This approach is particularly valuable when random assignment isn’t possible.
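Comparing patterns of change rather than end-point values amounts to a difference in change scores, often called a difference-in-differences. The sketch below shows only the arithmetic; the scores are made-up illustrative numbers, and the function name is hypothetical.

```python
import statistics

def difference_in_differences(treat_pre, treat_post, ctrl_pre, ctrl_post):
    """Change in the treatment group minus change in the comparison group."""
    treat_change = statistics.mean(treat_post) - statistics.mean(treat_pre)
    ctrl_change = statistics.mean(ctrl_post) - statistics.mean(ctrl_pre)
    return treat_change - ctrl_change

# Illustrative test scores (not real data).
treat_pre, treat_post = [60, 62, 58], [70, 73, 67]   # means 60 -> 70
ctrl_pre, ctrl_post = [65, 66, 64], [69, 70, 68]     # means 65 -> 69

estimate = difference_in_differences(treat_pre, treat_post, ctrl_pre, ctrl_post)
print(estimate)  # 10 - 4 = 6
```

Here the comparison group starts higher and still ends close to the treatment group, so a comparison of end points alone (70 versus 69) would suggest almost no effect. The change-score comparison attributes 6 points to the treatment, under the assumption that both groups would otherwise have changed by the same amount.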

Matching techniques can enhance internal validity when random assignment isn’t feasible. Propensity score matching, nearest neighbor matching, and other statistical approaches attempt to create comparable groups by pairing participants with similar characteristics. While not as robust as random assignment, matching can reduce selection bias when implemented carefully.
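As a rough illustration of nearest neighbor matching, the sketch below pairs each treated participant with the closest still-unmatched comparison participant on a single covariate. It is a simplified, greedy version under stated assumptions: one covariate, 1:1 matching without replacement, and hypothetical ids and ages. Real applications usually match on several covariates or on an estimated propensity score.

```python
def nearest_neighbor_match(treated, controls):
    """Greedy 1:1 matching without replacement on one covariate.

    treated, controls: lists of (participant_id, covariate_value).
    Returns a list of (treated_id, control_id) pairs.
    """
    available = list(controls)
    pairs = []
    for treated_id, treated_value in treated:
        if not available:
            break  # no comparison participants left to match
        # Pick the unmatched control closest on the covariate.
        best = min(available, key=lambda c: abs(c[1] - treated_value))
        available.remove(best)
        pairs.append((treated_id, best[0]))
    return pairs

# Hypothetical participants: (id, age).
treated = [("t1", 34), ("t2", 51)]
controls = [("c1", 29), ("c2", 50), ("c3", 36), ("c4", 62)]

print(nearest_neighbor_match(treated, controls))
# [('t1', 'c3'), ('t2', 'c2')]
```

Greedy matching depends on the order in which treated participants are processed, and it can only balance the covariates it is given, which is one reason matching is weaker than random assignment.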

Examples and Case Studies

Real-world examples illuminate how internal validity principles apply in practice and demonstrate both successful implementations and common failures.

Medical randomized controlled trials exemplify high internal validity in action. Consider a study testing a new blood pressure medication. Researchers randomly assign hypertensive patients to receive either the new drug or a placebo, with neither patients nor medical staff knowing who receives which treatment. Standardized protocols govern medication administration, blood pressure measurement procedures, and follow-up schedules. This design effectively controls for placebo effects, selection bias, measurement inconsistencies, and experimenter expectations, allowing confident attribution of any blood pressure differences to the medication itself.

Educational intervention studies often struggle with internal validity challenges. A well-designed example might involve randomly assigning classrooms to receive either a new mathematics curriculum or continue with the standard approach. Teachers receive equivalent training time and materials, students are assessed using standardized instruments, and researchers blind to condition assignment score the assessments. This design controls for teacher effects, student selection, and measurement bias.

Common internal validity failures provide instructive cautionary tales. A study claiming that a particular diet causes weight loss might compare people who chose to follow the diet with those who didn’t, ignoring that diet-choosers might be more motivated, health-conscious, or affluent. Without random assignment, apparent diet effects could reflect these pre-existing differences rather than the diet itself. Similarly, a program evaluation that measures participants only after treatment, without a control group, cannot distinguish genuine program effects from natural changes, historical events, or regression to the mean.

The pharmaceutical industry’s history with internal validity illustrates both successes and failures. Early drug testing often lacked proper controls, leading to incorrect conclusions about efficacy and safety. The thalidomide tragedy of the late 1950s and early 1960s, in which inadequate testing failed to identify the risk of severe birth defects, highlighted the critical importance of rigorous experimental design. Modern pharmaceutical research now employs multiple phases of increasingly rigorous testing, with randomized controlled trials serving as the definitive standard for establishing drug efficacy and safety.

These examples demonstrate that internal validity isn’t merely an abstract methodological concept but a practical necessity for generating reliable knowledge that can guide decisions affecting human welfare. Strong internal validity provides the foundation upon which we can build confidence in causal claims and make informed choices about interventions, treatments, and policies.

External Validity

External validity represents the extent to which research findings can be generalized beyond the specific conditions, participants, and contexts of the original study. While internal validity asks whether a causal relationship truly exists within a study, external validity addresses whether that relationship holds true in the broader world where the research will ultimately be applied.

The fundamental question driving external validity concerns is: “Do these results apply beyond this specific study?” This question encompasses multiple layers of generalization—from the immediate study sample to larger populations, from controlled laboratory conditions to real-world settings, and from the specific time and place of data collection to other temporal and cultural contexts.

External validity serves as the bridge between scientific discovery and practical application. A study with perfect internal validity but poor external validity may demonstrate a clear causal relationship that has little relevance outside the laboratory. Conversely, findings with strong external validity provide confidence that interventions, treatments, or phenomena observed in research will manifest similarly when implemented in real-world conditions.

The importance of external validity becomes particularly evident when research informs policy decisions, clinical practice, or educational interventions. Policymakers need assurance that programs shown to be effective in pilot studies will produce similar benefits when scaled up across diverse populations and varied implementation contexts. Healthcare providers require confidence that treatment effects demonstrated in clinical trials will translate to their patient populations, who may differ significantly from trial participants in age, health status, socioeconomic background, or cultural factors.

Dimensions of External Validity

External validity operates across multiple dimensions, each presenting unique challenges and considerations for researchers seeking to maximize the generalizability of their findings.

Population validity concerns the extent to which findings generalize across different groups of people. This dimension addresses whether results obtained with one demographic group apply to others who differ in characteristics such as age, gender, ethnicity, socioeconomic status, education level, or cultural background. A study demonstrating the effectiveness of a cognitive training program among college students, for example, may not automatically generalize to older adults, children, or individuals with different educational backgrounds. Population validity requires careful consideration of sample composition and the degree to which participants represent the broader population of interest.

Ecological validity focuses on generalization across settings and contexts. Laboratory studies, while offering excellent control over extraneous variables, may create artificial environments that bear little resemblance to the natural contexts where behaviors typically occur. A study examining memory performance in a quiet, distraction-free laboratory setting may not predict how memory functions in noisy, complex real-world environments. Ecological validity extends beyond physical settings to include social contexts, organizational structures, and environmental conditions that might influence how phenomena manifest in practice.

Temporal validity addresses whether findings remain stable across different time periods. Research conducted during specific historical moments may capture phenomena that are influenced by contemporary events, social movements, technological advances, or cultural shifts. Studies of social media’s impact on adolescent behavior conducted in 2015 may not fully apply to today’s dramatically different digital landscape. Temporal validity also encompasses shorter-term considerations, such as whether effects observed immediately after an intervention persist over weeks, months, or years.

Treatment validity examines whether findings generalize across variations of interventions or treatments. Real-world implementations of research-based interventions rarely match the precise protocols used in controlled studies. Treatment validity considers whether the core causal mechanisms remain effective when interventions are adapted, simplified, or modified to fit practical constraints. A complex therapeutic intervention that requires extensive training and supervision may lose its effectiveness when implemented by practitioners with limited resources or different qualifications.

Factors That Limit External Validity

Several systematic factors can constrain the generalizability of research findings, often stemming from the very design choices that enhance internal validity.

Artificial laboratory settings represent one of the most common threats to external validity. Laboratory environments, by design, eliminate many of the complexities, distractions, and contextual factors present in natural settings. Participants in laboratory studies may behave differently than they would in their everyday environments, responding to demand characteristics or the novelty of the research situation. The sterile, controlled nature of laboratory conditions may activate different psychological processes or mask important moderating factors that operate in real-world contexts.

Narrow, unrepresentative samples severely limit population validity. Much psychological research relies heavily on samples that are Western, Educated, Industrialized, Rich, and Democratic (WEIRD populations), which represent a small fraction of human diversity. University student samples, while convenient and accessible, may not represent the broader adult population in crucial ways. Beyond demographic limitations, convenience samples often exclude the individuals who are most difficult to reach, such as those with severe mental illness, limited literacy, language barriers, or extreme socioeconomic circumstances. These are often the very populations for whom research findings matter most.

Specific historical or cultural contexts can create temporal and ecological validity limitations. Research conducted during periods of social upheaval, economic crisis, or technological transition may capture phenomena that are specific to those circumstances. Cultural assumptions embedded in research designs, measurement instruments, and theoretical frameworks may not translate across different cultural contexts. Concepts like individualism, authority relationships, or emotional expression vary significantly across cultures, potentially limiting the applicability of findings derived from culturally specific contexts.

Highly controlled conditions that enhance internal validity often reduce external validity by creating situations that diverge markedly from real-world complexity. Perfect compliance with treatment protocols in research studies rarely matches the variable adherence seen in practice. The intensive monitoring and support provided to research participants may not be available in routine implementation. Multiple competing demands, resource constraints, and organizational pressures present in real-world settings are typically absent from controlled research environments.

Strategies to Improve External Validity

Researchers can employ various strategies to enhance the generalizability of their findings while maintaining scientific rigor.

Diverse sampling methods represent the most direct approach to improving population validity. Purposive sampling strategies that intentionally include participants across relevant demographic dimensions can enhance generalizability. Community-based sampling that reaches beyond university populations, healthcare settings, or other institutional contexts can capture broader population diversity. Multi-site studies conducted across different geographic regions, healthcare systems, or organizational contexts can test whether findings replicate across varied implementation environments.
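One simple way to make sure a sample spans the relevant demographic groups is to draw a fixed quota from each group. The sketch below is an illustrative assumption rather than a recommended procedure: the `age_band` field, the quota size, and the function name are hypothetical, and equal quotas deliberately over-represent small groups relative to their population share.

```python
import random
from collections import defaultdict

def stratified_sample(people, stratum_key, per_stratum, seed=None):
    """Draw up to `per_stratum` people at random from each stratum."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for person in people:
        strata[stratum_key(person)].append(person)
    sample = []
    for name in sorted(strata):
        members = strata[name]
        # A stratum smaller than the quota contributes everyone it has.
        k = min(per_stratum, len(members))
        sample.extend(rng.sample(members, k))
    return sample

# Hypothetical recruitment pool dominated by younger adults.
pool = ([{"id": i, "age_band": "18-29"} for i in range(50)]
        + [{"id": 50 + i, "age_band": "30-59"} for i in range(30)]
        + [{"id": 80 + i, "age_band": "60+"} for i in range(3)])

sample = stratified_sample(pool, lambda p: p["age_band"], per_stratum=5, seed=3)
print(len(sample))  # 5 + 5 + 3 = 13
```

If population-level estimates are needed afterward, the over-sampled groups have to be weighted back to their true proportions.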

Field studies and naturalistic observations sacrifice some experimental control to gain ecological validity. Conducting research in natural settings—schools, workplaces, healthcare facilities, community centers—allows researchers to observe phenomena as they naturally occur within complex, dynamic environments. Field experiments that manipulate variables within real-world contexts can maintain some experimental control while preserving ecological realism. Naturalistic observation studies, while lacking the causal clarity of experiments, provide rich descriptions of how phenomena manifest in their natural contexts.

Replication across different populations and settings serves as perhaps the most robust strategy for establishing external validity. Systematic replication programs that test core findings across varied samples, contexts, and implementation approaches build cumulative evidence for generalizability. Cross-cultural replications reveal whether phenomena represent universal human tendencies or culture-specific patterns. Replication across different age groups, socioeconomic strata, or clinical populations tests the boundaries of generalizability.

Meta-analyses combining multiple studies provide powerful tools for assessing external validity by examining patterns of effects across diverse research contexts. Meta-analytic approaches can identify moderating factors that influence the magnitude or direction of effects across different populations or settings. By aggregating findings from multiple studies with varied methodological approaches, meta-analyses can reveal whether effects are robust across different operational definitions, measurement approaches, or implementation strategies.
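The core arithmetic of a simple meta-analysis can be sketched briefly. The example below assumes a fixed-effect, inverse-variance model and reports Cochran's Q and the I² statistic as rough indicators of between-study heterogeneity; the effect sizes and standard errors are invented for illustration, and the function name is hypothetical. When effects are expected to differ across populations or settings, a random-effects model is usually the more appropriate choice.

```python
import math

def fixed_effect_pool(effects, std_errors):
    """Inverse-variance pooled effect with Cochran's Q and I^2."""
    weights = [1 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1 / total_weight)
    # Q: weighted squared deviations of study effects from the pooled effect.
    q = sum(w * (e - pooled) ** 2 for w, e in zip(weights, effects))
    df = len(effects) - 1
    # I^2: share of variation beyond what chance alone would produce.
    i_squared = max(0.0, (q - df) / q) if q > 0 else 0.0
    return pooled, pooled_se, q, i_squared

# Two hypothetical studies with equal precision.
pooled, pooled_se, q, i_squared = fixed_effect_pool([0.2, 0.4], [0.1, 0.1])
print(f"pooled = {pooled:.2f}, SE = {pooled_se:.3f}, Q = {q:.1f}, I^2 = {i_squared:.0%}")
```

A high I² suggests that the effect varies across studies, which is a cue to look for moderators such as population or setting rather than to rely on the single pooled number.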

Modern approaches to enhancing external validity increasingly emphasize adaptive and responsive research designs. Sequential multiple assignment randomized trials (SMARTs) test intervention sequences that mirror real-world decision-making processes. Implementation science frameworks explicitly focus on understanding how interventions work across diverse practice contexts. Community-based participatory research approaches involve stakeholders in research design and implementation, increasing the likelihood that studies address relevant questions in culturally appropriate ways.

The integration of big data and digital technologies offers new opportunities for enhancing external validity. Large-scale observational studies using digital traces, electronic health records, or administrative databases can test whether experimentally derived findings manifest at population scales. Real-world evidence studies can track intervention effects as they unfold in routine practice settings, providing crucial information about external validity that traditional controlled trials cannot capture.

The Tension Between Internal and External Validity

The Trade-off Dilemma

The relationship between internal and external validity represents one of the most fundamental tensions in research design. Design choices that strengthen one type of validity often weaken the other, creating a persistent dilemma for researchers who must navigate competing methodological priorities. This tension arises because the very controls that allow researchers to isolate causal relationships and eliminate confounding factors may simultaneously create artificial conditions that limit the generalizability of findings.

Laboratory control exemplifies this trade-off most clearly. Controlled laboratory environments excel at establishing internal validity by eliminating extraneous variables, standardizing procedures, and creating optimal conditions for detecting treatment effects. However, these same features can severely compromise external validity by creating artificial situations that bear little resemblance to the messy, complex environments where the phenomena naturally occur. A carefully controlled laboratory study of decision-making processes may reveal clear causal mechanisms under ideal conditions, but these mechanisms might operate differently when embedded within the time pressures, competing demands, and social influences present in real-world decision contexts.

The trade-off extends beyond physical settings to encompass participant selection, measurement approaches, and intervention implementation. Homogeneous samples enhance internal validity by reducing between-participant variability that might obscure treatment effects, but they limit population validity by excluding the diversity present in real-world target populations. Highly trained research staff implementing standardized protocols with perfect fidelity enhance internal validity but may not reflect the variable implementation quality typical in routine practice settings.

This dilemma becomes particularly acute in applied research fields where findings must inform real-world interventions. Clinical researchers face pressure to demonstrate both that treatments work under optimal conditions (efficacy) and that they work in routine practice settings (effectiveness). Educational researchers must show that interventions produce learning gains in controlled studies while also demonstrating that these gains persist when interventions are implemented by typical teachers in typical classrooms with typical resources.

The temporal dimension of this trade-off adds another layer of complexity. Research conducted under highly controlled conditions may reveal effects that emerge slowly or require sustained implementation to manifest. However, the artificial support structures present in research settings may not exist in real-world implementations, potentially undermining the long-term sustainability of observed effects.

Balancing Act in Research Design

Successful navigation of the internal-external validity tension requires strategic thinking about research design choices and their implications for different types of validity. Rather than viewing this as a zero-sum trade-off, researchers can employ several strategies to optimize both forms of validity within the constraints of their resources and research questions.

Sequential approaches represent one of the most effective strategies for addressing both validity concerns across a program of research. This approach typically begins with tightly controlled studies that establish internal validity by demonstrating clear causal relationships under optimal conditions. Subsequent studies then systematically relax experimental controls to test whether effects persist under increasingly realistic conditions. This progression from laboratory studies to field studies to implementation studies allows researchers to build cumulative evidence that addresses both causal mechanisms and real-world applicability.

The pharmaceutical industry provides a clear model of sequential validity testing through its phased approach to clinical trials. Phase I trials test safety and dosing in small, carefully selected groups under highly controlled conditions. Phase II trials assess preliminary efficacy and side effects in larger groups of patients with the target condition. Phase III trials strengthen both causal evidence and external validity by running large randomized comparisons across multiple sites with broader inclusion criteria. Phase IV post-market surveillance studies continue to monitor external validity as interventions are implemented in routine practice.

Multi-site studies offer another approach to balancing validity concerns within single research projects. By conducting identical or similar studies across multiple locations, research teams can test whether effects replicate across different populations, settings, and implementation contexts while maintaining sufficient experimental control to support causal inferences. Multi-site designs allow researchers to examine both the consistency of effects (supporting generalizability) and the factors that moderate effects across sites (identifying boundary conditions for external validity).

Hybrid designs attempt to optimize both forms of validity within single studies through creative methodological approaches. Cluster randomized trials randomize entire groups or organizations rather than individuals, allowing for more naturalistic implementation while maintaining experimental control. Stepped wedge designs introduce interventions sequentially across multiple sites, providing both within-site and between-site comparisons while allowing all sites to eventually receive the intervention.
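A stepped wedge rollout can be expressed as a schedule in which every site starts in the control condition and crosses over to the intervention at a randomly assigned step. The sketch below is a minimal illustration under stated assumptions: sites are split as evenly as possible across steps, period 0 is an all-control baseline, and the site labels and function name are hypothetical.

```python
import random

def stepped_wedge_schedule(sites, n_steps, seed=None):
    """Map each site to a list of 0/1 flags for periods 0..n_steps.

    0 = control, 1 = intervention. The order in which sites cross
    over is randomized; once a site starts, it stays on.
    """
    rng = random.Random(seed)
    order = list(sites)
    rng.shuffle(order)  # randomize which sites cross over first
    schedule = {}
    for i, site in enumerate(order):
        # Spread sites across steps 1..n_steps in roughly equal blocks.
        start = 1 + (i * n_steps) // len(order)
        schedule[site] = [1 if period >= start else 0
                          for period in range(n_steps + 1)]
    return schedule

schedule = stepped_wedge_schedule(["A", "B", "C", "D", "E", "F"], n_steps=3, seed=11)
for site in sorted(schedule):
    print(site, schedule[site])
```

Each row begins with 0 and ends with 1, so every site contributes both control and intervention periods and every site eventually receives the intervention.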

Pragmatic trials explicitly prioritize external validity by testing interventions under conditions that closely mirror routine practice. These studies sacrifice some internal validity by allowing greater variation in implementation, including diverse participants with multiple comorbidities, and permitting flexible adaptation of interventions to local contexts. The PRECIS-2 tool helps researchers design studies along a continuum from explanatory (internal validity focused) to pragmatic (external validity focused) approaches.

Mixed-methods approaches can address different aspects of validity through complementary methodological strategies. Quantitative experimental components can establish causal relationships and effect sizes while qualitative components explore mechanisms, contextual factors, and implementation processes that influence external validity. Process evaluations embedded within randomized trials can identify factors that facilitate or impede successful implementation, informing both the interpretation of trial results and future implementation efforts.

Contextual Considerations

The optimal balance between internal and external validity depends heavily on the research context, including the stage of knowledge development, the intended application of findings, and the specific research questions being addressed. Different phases of scientific inquiry may appropriately emphasize different forms of validity based on the current state of knowledge and the most pressing research needs.

Early-stage research and basic mechanism studies often appropriately prioritize internal validity over external validity. When fundamental questions about causal relationships remain unresolved, establishing clear evidence for causation under controlled conditions takes precedence over demonstrating generalizability. Basic research investigating cognitive processes, neural mechanisms, or fundamental social phenomena may legitimately focus on internal validity, with external validity addressed in subsequent research phases.

Discovery-oriented research exploring new phenomena or testing novel theoretical predictions may also emphasize internal validity to establish proof-of-concept before investing resources in generalizability studies. Laboratory studies that reveal new learning mechanisms or demonstrate previously unknown treatment effects provide valuable scientific knowledge even if their immediate external validity is limited.

Applied research with immediate policy implications typically requires stronger emphasis on external validity, even at some cost to internal validity. When research findings will directly inform policy decisions, program implementations, or clinical practice guidelines, demonstrating effectiveness under realistic conditions becomes paramount. Policy-relevant research must address questions about scalability, sustainability, and implementation feasibility that may be difficult to answer in highly controlled laboratory studies.

Healthcare research exemplifies this emphasis on external validity for applied questions. While internally valid efficacy studies establish that treatments can work under optimal conditions, healthcare decision-makers need effectiveness studies that demonstrate whether treatments do work under routine practice conditions. The growing emphasis on comparative effectiveness research and real-world evidence reflects recognition that external validity considerations are crucial for healthcare applications.

Translational research occupying the space between basic and applied research must carefully balance both validity concerns. Translational studies attempt to bridge laboratory findings and clinical applications, requiring sufficient internal validity to understand causal mechanisms while maintaining enough external validity to inform real-world applications. This research phase often employs sequential or hybrid approaches that systematically address both validity concerns.

Crisis or emergency contexts may necessitate accepting lower internal validity to achieve timely answers with adequate external validity. During public health emergencies, natural disasters, or other urgent situations, researchers may need to conduct studies under less-than-ideal conditions to provide actionable information for immediate decision-making. The COVID-19 pandemic illustrated how external validity considerations sometimes outweigh internal validity concerns when rapid implementation of interventions is necessary.

The intended audience and end-users of research also influence optimal validity balance. Research intended for scientific audiences may appropriately emphasize internal validity to contribute to theoretical understanding, while research intended for practitioners or policymakers may need to prioritize external validity to support implementation decisions. Regulatory agencies may require different types of evidence than academic journals, leading to different optimal balances between validity types.

Resource constraints and practical limitations often force researchers to make pragmatic decisions about validity trade-offs. Limited funding, time constraints, or restricted access to populations may prevent researchers from achieving optimal balance between internal and external validity. In these situations, researchers must make transparent decisions about which validity concerns to prioritize while acknowledging the limitations of their choices and planning future research to address neglected validity concerns.

Implications for Research Interpretation

For Researchers

Researchers bear the primary responsibility for acknowledging validity limitations transparently and designing studies that appropriately balance different validity concerns based on their research objectives and contexts. This responsibility encompasses not only the technical aspects of study design but also the ethical obligation to communicate findings in ways that accurately represent their scope and limitations.

Acknowledging limitations in published studies requires researchers to move beyond perfunctory limitations sections toward thoughtful analysis of how validity concerns affect the interpretation and application of findings. Limitations require a critical, overall appraisal and interpretation of their impact, addressing whether identified problems with methods, validity, or generalizability matter and to what extent. Effective limitations discussions should specifically address both internal and external validity concerns, helping readers understand the conditions under which findings are most and least likely to hold.

Rather than simply listing potential limitations, researchers should prioritize those that most significantly affect the interpretation of results. A laboratory study with excellent internal validity should acknowledge specific external validity limitations—particular populations, settings, or conditions to which findings may not generalize. Conversely, field studies with strong external validity should address potential internal validity threats that might limit confidence in causal inferences.

Transparency about validity limitations also involves providing sufficient detail about study methods, participants, settings, and procedures to allow readers to assess generalizability to their own contexts. Methods sections should explicitly discuss the validity of assessment tools, particularly when researchers modify previously studied instruments, use them in different settings or with different populations, or apply different interpretation criteria.

Designing follow-up studies to address validity concerns represents a proactive approach to building cumulative evidence that addresses multiple validity domains. Researchers should view individual studies as components of larger research programs that systematically address different aspects of validity across multiple investigations. This programmatic approach allows researchers to optimize specific types of validity in individual studies while building overall evidence that addresses both causal mechanisms and real-world applications.

Sequential study designs can systematically progress from internally valid laboratory studies to externally valid field studies, with each phase informing the design of subsequent investigations. Researchers might begin with tightly controlled studies that establish causal relationships and identify key mechanisms, followed by studies that test these mechanisms under increasingly realistic conditions. This progression allows researchers to maintain confidence in causal inferences while systematically addressing external validity concerns.

Collaborative approaches that bring together researchers with different methodological strengths can address validity concerns more comprehensively than individual research efforts. Laboratory-based researchers can partner with field-based colleagues to design coordinated studies that address complementary validity questions. Implementation scientists can work with efficacy researchers to design studies that bridge the gap between controlled trials and routine practice.

Building systematic research programs requires long-term thinking about how individual studies contribute to cumulative knowledge that addresses multiple validity domains. Rather than conducting isolated studies, researchers should develop research programs that systematically address different populations, settings, time periods, and implementation approaches. This approach recognizes that no single study can adequately address all validity concerns and that scientific progress requires coordinated efforts across multiple investigations.

Systematic research programs should include explicit plans for replication across different contexts, populations, and settings. Rather than viewing replication as merely confirmatory, researchers should design replication studies that extend external validity by testing boundary conditions and moderating factors. Cross-cultural replications, studies across different age groups, and investigations in varied organizational contexts can reveal whether core findings represent universal principles or context-specific phenomena.

For Practitioners and Policymakers

Practitioners and policymakers face the challenging task of making decisions based on research evidence while navigating the uncertainties and limitations inherent in any scientific study. Evidence-based practice requires the conscientious and judicious use of current best evidence in conjunction with clinical expertise and patient values, recognizing that research findings must be interpreted within specific practice contexts.

Critical evaluation of research evidence requires practitioners and policymakers to develop skills in assessing both the quality of individual studies and the cumulative strength of evidence across multiple investigations. This evaluation should consider not only whether studies demonstrate statistically significant effects but also whether the conditions under which effects were demonstrated match the conditions of intended implementation.

Internal validity assessment involves examining whether studies provide convincing evidence for causal relationships. Practitioners should look for evidence of appropriate control groups, random assignment, and procedures to minimize bias and confounding. However, internal validity alone is insufficient for practice decisions—effects demonstrated under highly controlled conditions may not persist under the variable conditions typical of routine practice.

External validity assessment requires practitioners to evaluate whether study participants, settings, and implementation approaches match their own contexts sufficiently to justify applying research findings. Because many studies do not report the information needed to judge external validity, practitioners are often unable to determine whether a given study’s findings apply to their local setting, population, staffing, or resources. Practitioners must consider whether their patients, students, or clients resemble study participants in characteristics that might moderate intervention effects.

Understanding the limits of generalization involves recognizing that research findings represent probabilities rather than certainties and that effects may vary across different implementation contexts. Even well-designed studies with strong validity provide evidence about what is likely to work under certain conditions rather than guarantees about what will work in all situations.

Practitioners should be particularly cautious about generalizing from studies conducted with highly selected populations to more diverse practice populations. Research participants who volunteer for studies, meet strict inclusion criteria, and complete study protocols may differ systematically from typical practice populations in motivation, adherence, or other characteristics that influence treatment outcomes.

Similarly, interventions implemented by highly trained research staff with extensive resources and support may produce different effects than those implemented under typical practice constraints. Practitioners should look for evidence about implementation requirements, including training needs, resource requirements, and organizational supports necessary for successful implementation.

Making informed decisions despite uncertainty requires practitioners and policymakers to balance research evidence with other considerations including resource constraints, competing priorities, and stakeholder preferences. Evidence-based policymaking involves using formal, explicit methods to analyze evidence and make it available to decision makers, but it also requires recognition that evidence is only one input into complex decision-making processes.

When research evidence has limited external validity for specific practice contexts, practitioners may need to implement interventions on a pilot basis with careful monitoring and evaluation. This approach allows practitioners to test whether research findings generalize to their specific contexts while minimizing risks associated with full-scale implementation of unproven interventions.

Practitioners should also seek evidence from multiple sources rather than relying on single studies. Systematic reviews and meta-analyses can provide broader perspectives on intervention effectiveness across diverse contexts, helping practitioners understand the range of expected effects and the factors that moderate intervention success.

For Research Consumers

Research consumers—including journalists, advocacy organizations, and members of the public—play crucial roles in interpreting and disseminating research findings. However, research consumers often lack specialized training in research methodology, making them vulnerable to misinterpreting study findings or overgeneralizing results beyond their appropriate scope.

Red flags when reading research studies can help research consumers identify potential validity concerns that might limit the applicability of findings. Studies with extremely small sample sizes may lack sufficient power to detect effects reliably or may have limited generalizability to broader populations. Research conducted with highly unusual populations or under artificial conditions should raise questions about external validity.
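As a rough illustration of why very small samples are a red flag, the following Python sketch estimates how many participants per group a two-group comparison would need to detect a given standardized effect. It relies on the common normal-approximation formula; the function name and the default alpha and power values are illustrative assumptions, not figures taken from any particular study.

```python
import math
from statistics import NormalDist

def participants_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-group comparison of means.

    Normal approximation: n = 2 * (z_(1 - alpha/2) + z_power)^2 / d^2.
    An exact t-based calculation gives slightly larger numbers.
    """
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the test
    z_power = NormalDist().inv_cdf(power)          # quantile for the desired power
    return math.ceil(2 * (z_alpha + z_power) ** 2 / effect_size ** 2)

# Hypothetical standardized effects: large, medium, small.
for d in (0.8, 0.5, 0.2):
    print(f"d = {d}: about {participants_per_group(d)} participants per group")
```

The required sample grows with the inverse square of the effect size, so a study with a handful of participants per group can reliably detect only very large effects.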

Claims about causation based on correlational or observational studies represent another common red flag. While such studies can provide valuable information about associations between variables, they cannot establish causal relationships with the same confidence as well-designed experimental studies. Research consumers should be skeptical of causal claims that are not supported by appropriate experimental evidence.

Overgeneralization of findings represents a particularly common problem in research communication. Studies conducted with specific populations (such as college students) may be presented as if they apply to all adults. Research conducted in particular countries or cultures may be presented as if findings are universal. Research consumers should look for clear descriptions of study populations and settings to assess the appropriateness of generalizing findings to other contexts.

Questions to ask about study design and conclusions can help research consumers evaluate the quality and applicability of research findings. Key questions include: Who participated in this study and do they resemble the populations to whom findings are being applied? Where was the study conducted and do these settings match the contexts where findings will be implemented? How were participants assigned to different conditions and were appropriate controls used to minimize bias?

Research consumers should also ask about the magnitude and practical significance of effects, not just their statistical significance. Small effects that reach statistical significance in large studies may have limited practical importance. Effect sizes and confidence intervals provide more informative measures of the magnitude and precision of effects than p-values alone.
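To make the distinction between magnitude and statistical significance concrete, here is a minimal Python sketch that computes a standardized effect size (Cohen’s d) and an approximate 95% confidence interval for a difference in means. The blood pressure readings are made-up illustrative values, and the interval uses a normal approximation (z = 1.96), which is an assumption that is only reasonable for larger samples.

```python
import math
import statistics

def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    var_a = statistics.variance(group_a)
    var_b = statistics.variance(group_b)
    pooled_sd = math.sqrt(((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2))
    return (statistics.mean(group_a) - statistics.mean(group_b)) / pooled_sd

def mean_difference_ci(group_a, group_b, z=1.96):
    """Approximate 95% CI for mean(a) - mean(b), using a normal approximation."""
    diff = statistics.mean(group_a) - statistics.mean(group_b)
    se = math.sqrt(
        statistics.variance(group_a) / len(group_a)
        + statistics.variance(group_b) / len(group_b)
    )
    return diff - z * se, diff + z * se

# Hypothetical systolic blood pressure readings (mmHg), for illustration only.
treatment = [118, 122, 125, 119, 121, 124]
control = [126, 130, 128, 131, 127, 129]

low, high = mean_difference_ci(treatment, control)
print(f"Cohen's d: {cohens_d(treatment, control):.2f}")
print(f"Approximate 95% CI for the mean difference: ({low:.1f}, {high:.1f})")
```

A reader can then ask two separate questions: how large is the difference in practical terms, and how wide is the range of values compatible with the data.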

Questions about funding sources and potential conflicts of interest can also inform the evaluation of research findings. While funding sources do not automatically invalidate research findings, they can create incentives that influence study design, data interpretation, or reporting practices in ways that might bias results.

The importance of looking at multiple studies cannot be overstated in research evaluation. Single studies, regardless of their quality, provide limited evidence for broad conclusions about intervention effectiveness or causal relationships. Research consumers should seek evidence from multiple independent studies conducted by different research teams in different contexts before drawing strong conclusions about research findings.

Systematic reviews and meta-analyses provide valuable resources for research consumers by synthesizing evidence across multiple studies and identifying patterns of effects across different contexts and populations. However, research consumers should also evaluate the quality of systematic reviews, including the comprehensiveness of literature searches, the quality of included studies, and the appropriateness of statistical analyses.
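The core arithmetic behind many meta-analyses can be sketched in a few lines of Python. The example below pools effect estimates with inverse-variance weights under a fixed-effect model, which is an assumption: it treats every study as estimating the same underlying effect, and reviews spanning very different contexts often use random-effects models instead. The study values are hypothetical.

```python
import math

def pooled_effect(effects, standard_errors):
    """Fixed-effect, inverse-variance pooled estimate and its standard error."""
    if len(effects) != len(standard_errors) or not effects:
        raise ValueError("need one standard error per effect estimate")
    # More precise studies (smaller standard errors) receive larger weights.
    weights = [1 / se ** 2 for se in standard_errors]
    pooled = sum(w * e for w, e in zip(weights, effects)) / sum(weights)
    pooled_se = math.sqrt(1 / sum(weights))
    return pooled, pooled_se

# Hypothetical effect estimates and standard errors from three studies.
effects = [0.30, 0.45, 0.10]
standard_errors = [0.10, 0.20, 0.15]

estimate, se = pooled_effect(effects, standard_errors)
print(f"Pooled effect: {estimate:.2f}")
print(f"Approximate 95% CI: ({estimate - 1.96 * se:.2f}, {estimate + 1.96 * se:.2f})")
```

Because the weights depend on precision, a single large study can dominate the pooled result, which is one reason the quality of the included studies matters.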

The persistence of barriers to evidence use in policy despite decades of research highlights the complexity of translating research findings into practice. Research consumers should maintain realistic expectations about the time required for research findings to influence policy and practice and should recognize that implementation often requires adaptation of interventions to local contexts.

Media coverage of research findings often oversimplifies complex validity considerations, presenting findings as more definitive or broadly applicable than the underlying research supports. Research consumers should seek original research reports or systematic reviews rather than relying solely on media summaries when making important decisions based on research evidence.

The digital age has made research findings more accessible to general audiences, but it has also increased exposure to low-quality or misleading research. Research consumers should prioritize findings published in peer-reviewed journals and should be skeptical of research claims that seem too good to be true or that contradict well-established scientific consensus without compelling evidence.

FAQs

What is an example of internal validity?

An example of internal validity is a randomized controlled trial (RCT) testing a new drug’s effect on blood pressure. By randomly assigning participants to a treatment or placebo group, controlling for diet, exercise, and other variables, and using double-blind procedures, the study makes it far more plausible that observed changes in blood pressure are caused by the drug rather than by participant expectations, lifestyle differences, or other extraneous factors.

What is an example of external validity?

An example of external validity is whether findings from the same RCT hold in a broader population. If the trial enrolled only healthy adults aged 18–40, but the drug is later prescribed to elderly patients with comorbidities, the findings have high external validity only if the drug lowers blood pressure comparably in this wider group. If it does not, the original result may still be internally valid, but it does not generalize.

What are the three types of internal validity?

Internal validity is often discussed in terms of specific threats or aspects, but it is not strictly categorized into “three types.” Instead, internal validity encompasses the overall confidence in causal conclusions, supported by factors like:

Control of Confounding Variables: Ensuring extraneous variables (e.g., age, diet) don’t influence the outcome.

Randomization: Randomly assigning participants to groups to guard against selection bias.

Blinding: Using single- or double-blind procedures to prevent participant or researcher bias from affecting results.

These elements collectively strengthen internal validity by isolating the effect of the independent variable.
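The randomization element above can be illustrated with a short Python sketch of simple random assignment. The function name, the two group labels, and the seed are hypothetical choices made for illustration; real trials often use stratified or block randomization managed by dedicated software.

```python
import random

def assign_groups(participant_ids, seed=None):
    """Randomly split participants into treatment and control groups.

    A fixed seed makes the allocation reproducible for auditing.
    """
    rng = random.Random(seed)
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    midpoint = len(shuffled) // 2
    return {"treatment": shuffled[:midpoint], "control": shuffled[midpoint:]}

# Hypothetical participant IDs 1 through 20.
groups = assign_groups(range(1, 21), seed=42)
print("Treatment:", sorted(groups["treatment"]))
print("Control:  ", sorted(groups["control"]))
```

Because chance alone decides who lands in each group, characteristics such as age or diet tend to balance out across groups as the sample grows.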

What is the difference between internal and external reliability in research?

The terms “internal reliability” and “external reliability” are less common in research methodology compared to internal and external validity, but they can be understood in the context of measurement consistency:

Internal Reliability: Refers to the consistency of a measure within a study, often called internal consistency. For example, if a questionnaire assessing anxiety produces consistent responses across multiple items measuring the same concept (e.g., a high Cronbach’s alpha), it has high internal reliability. This indicates that the items cohere as a measure of a single construct within the study’s context.

External Reliability: Refers to the consistency of a measure across occasions, raters, or settings; test-retest reliability is a common form. For example, if the same anxiety questionnaire yields similar scores when the same respondents complete it at different times, it has high external reliability, indicating stability beyond a single administration.

Key Difference: Internal reliability focuses on consistency within a single study’s measurement tool, while external reliability emphasizes consistency across varied contexts or repeated applications. In contrast, internal and external validity address causal accuracy and generalizability, respectively, not measurement consistency.
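Both forms of reliability described above can be estimated with simple calculations. The Python sketch below computes Cronbach’s alpha for internal consistency and a Pearson correlation between two administrations as a test-retest estimate. All scores are hypothetical, and the helper names are illustrative rather than drawn from any particular statistics package.

```python
import math
import statistics

def cronbach_alpha(item_scores):
    """Cronbach's alpha; item_scores holds one list per item, ordered by respondent."""
    k = len(item_scores)
    if k < 2:
        raise ValueError("need at least two items")
    item_variances = [statistics.variance(item) for item in item_scores]
    # Total score per respondent, summed across items.
    totals = [sum(scores) for scores in zip(*item_scores)]
    return (k / (k - 1)) * (1 - sum(item_variances) / statistics.variance(totals))

def pearson_r(x, y):
    """Pearson correlation between two equally long lists of scores."""
    mean_x, mean_y = statistics.mean(x), statistics.mean(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(ss_x * ss_y)

# Hypothetical data: three anxiety items answered by five respondents.
items = [[3, 4, 2, 5, 4], [3, 5, 2, 4, 4], [2, 4, 3, 5, 5]]
# Hypothetical total scores for the same respondents at two time points.
time_1 = [8, 13, 7, 14, 13]
time_2 = [9, 12, 8, 14, 12]

print(f"Cronbach's alpha (internal reliability): {cronbach_alpha(items):.2f}")
print(f"Test-retest correlation (external reliability): {pearson_r(time_1, time_2):.2f}")
```

Values closer to 1 indicate greater consistency in both cases, although acceptable thresholds vary by field and purpose.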