In today’s data-driven world, quantitative methods serve as powerful tools for understanding complex phenomena and making informed decisions across diverse fields. These statistical and mathematical approaches transform raw data into meaningful insights, enabling researchers, analysts, and decision-makers to identify patterns, test hypotheses, and predict outcomes with measurable precision.

From healthcare professionals analyzing clinical trial results to financial analysts evaluating market trends, quantitative methods provide the foundation for evidence-based conclusions. These techniques encompass a broad spectrum of approaches, including regression analysis, hypothesis testing, time series analysis, and experimental design. Each method offers unique advantages for specific research questions and data types.

The applications span virtually every industry and academic discipline. Marketing teams use quantitative analysis to segment customers and measure campaign effectiveness, while social scientists employ these methods to study human behavior and policy impacts. This systematic approach to data analysis continues to evolve, incorporating advanced computational techniques that expand the possibilities for discovery and innovation.

What Are Quantitative Methods?

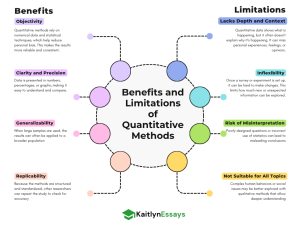

Quantitative methods are systematic approaches to research and analysis that rely on numerical data and statistical techniques to investigate problems, test theories, and measure relationships between variables. Unlike qualitative methods that focus on descriptive observations and interpretations, quantitative methods emphasize objectivity, measurement, and mathematical precision.

At their core, these methods involve collecting numerical data through structured instruments such as surveys, experiments, or databases, then applying statistical procedures to analyze this information. The goal is to quantify phenomena, estimate relationships between variables (including cause-and-effect relationships when the study design supports causal inference, as in a randomized experiment), and generate findings that can be replicated and generalized to larger populations.

Quantitative methods encompass various statistical techniques ranging from basic descriptive statistics that summarize data characteristics to advanced inferential statistics that help researchers draw conclusions about populations based on sample data. Common approaches include correlation analysis to measure relationships between variables, regression modeling to predict outcomes, and hypothesis testing to determine statistical significance.

These methods are distinguished by their emphasis on numerical precision, standardized procedures, and the ability to produce results that can be expressed in mathematical terms, making them particularly valuable for scientific research and data-driven business decisions.

Common Quantitative Methods

Several fundamental quantitative methods form the backbone of statistical analysis across disciplines. Understanding these core techniques helps researchers and analysts select the most appropriate approach for their specific research questions and data types.

Descriptive Statistics provide the foundation for data analysis by summarizing and describing the basic features of datasets. These methods include measures of central tendency (mean, median, mode), measures of variability (standard deviation, variance, range), and frequency distributions. Descriptive statistics help researchers understand data patterns before conducting more advanced analyses.
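As a minimal sketch of these summary measures, the snippet below uses Python's built-in `statistics` module. The `order_values` list is made-up illustrative data, not taken from any study described here.

```python
import statistics

# Hypothetical order values in dollars (illustrative data only).
order_values = [40, 55, 55, 70, 85, 90, 120]

summary = {
    # Measures of central tendency
    "mean": statistics.mean(order_values),
    "median": statistics.median(order_values),
    "mode": statistics.mode(order_values),
    # Measures of variability (sample versions, dividing by n - 1)
    "stdev": statistics.stdev(order_values),
    "variance": statistics.variance(order_values),
    "range": max(order_values) - min(order_values),
}

for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```

Comparing the mean with the median is a quick first check for skew: here the single large order pulls the mean above the median.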

Correlation Analysis examines the strength and direction of relationships between pairs of variables. The Pearson correlation coefficient measures linear relationships, while the rank-based Spearman correlation captures monotonic relationships, including non-linear ones, and is less sensitive to outliers. This method is essential for identifying potential connections between variables, but a correlation on its own does not imply causation.
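The difference between the two coefficients is easiest to see on data that rise steadily but not in a straight line. The sketch below implements both by hand with the standard library. The helper names (`pearson`, `ranks`, `spearman`) and the data are illustrative assumptions, and Spearman is computed here as Pearson applied to average ranks.

```python
import math

def pearson(x, y):
    """Pearson correlation: covariance scaled by both standard deviations."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)

def ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))

# Hypothetical data: y always increases with x, but along a curve.
x = [1, 2, 3, 4, 5, 6]
y = [v ** 3 for v in x]

print(f"Pearson:  {pearson(x, y):.3f}")
print(f"Spearman: {spearman(x, y):.3f}")
```

Because the relationship is perfectly monotonic, the Spearman coefficient reaches 1, while the Pearson coefficient falls short of 1 because the points do not lie on a straight line.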

Regression Analysis goes beyond correlation to model relationships between a dependent variable and one or more independent variables. Simple linear regression predicts an outcome from a single predictor, while multiple regression includes several predictors at once. Logistic regression is used when the outcome variable is categorical, most commonly binary (for example, churned versus retained).
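For the single-predictor case, the least-squares line can be computed directly from the data. The following is a small sketch rather than a full modeling workflow. The function name `fit_line` and the advertising and sales figures are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least squares for one predictor: y = intercept + slope * x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical data: ad spend (thousands of dollars) and sales (thousands of units).
ad_spend = [1, 2, 3, 4, 5]
sales = [12.1, 13.9, 16.2, 17.8, 20.0]

slope, intercept = fit_line(ad_spend, sales)
print(f"sales = {intercept:.2f} + {slope:.2f} * ad_spend")

# Predict sales at a spend level that is not in the data.
predicted = intercept + slope * 6
print(f"predicted sales at ad_spend = 6: {predicted:.2f}")
```

In practice, analysts usually fit models with several predictors using a statistics package, but the slope is interpreted the same way: the expected change in the outcome for a one-unit change in the predictor.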

Hypothesis Testing provides a systematic framework for making statistical inferences about populations based on sample data. Common tests include t-tests for comparing means, chi-square tests for categorical data relationships, and ANOVA for comparing multiple groups. These methods help determine whether observed differences are statistically significant, meaning they would be unlikely to arise from random chance alone if there were no true effect.
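The logic shared by all of these tests can be illustrated with an exact permutation test, which is swapped in here because Python's standard library does not include the t distribution. It asks how often a difference in means at least as large as the observed one would appear if the group labels were assigned at random. The function name and the two small samples are hypothetical.

```python
from itertools import combinations
from statistics import mean

def permutation_test(group_a, group_b):
    """Exact two-sided permutation test for a difference in means."""
    pooled = group_a + group_b
    observed = abs(mean(group_a) - mean(group_b))
    indices = range(len(pooled))
    total = 0
    at_least_as_extreme = 0
    # Enumerate every way of relabeling the pooled values into two groups.
    for chosen in combinations(indices, len(group_a)):
        chosen_set = set(chosen)
        relabeled_a = [pooled[i] for i in chosen]
        relabeled_b = [pooled[i] for i in indices if i not in chosen_set]
        total += 1
        # Small tolerance guards against floating-point rounding.
        if abs(mean(relabeled_a) - mean(relabeled_b)) >= observed - 1e-12:
            at_least_as_extreme += 1
    return at_least_as_extreme / total

# Hypothetical scores for a control group and a treated group.
control = [10, 12, 11, 13, 12]
treated = [15, 17, 16, 18, 16]

p_value = permutation_test(control, treated)
print(f"p-value: {p_value:.4f}")
```

A small p-value means the observed difference would rarely occur under random labeling, which is the same reasoning a t-test applies using a theoretical distribution instead of enumeration.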

Time Series Analysis specializes in analyzing data collected over time to identify trends, seasonal patterns, and forecast future values. This method is crucial for financial modeling, demand forecasting, and monitoring performance indicators over extended periods.
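One of the simplest time series techniques is a moving average, which smooths short-term fluctuations to expose the underlying trend. The sketch below is illustrative only: `monthly_demand` is made-up data, and using the last smoothed value as a forecast is a deliberately naive choice rather than a recommended model.

```python
def moving_average(series, window):
    """Average each run of `window` consecutive observations."""
    if window < 1 or window > len(series):
        raise ValueError("window must be between 1 and len(series)")
    return [
        sum(series[i:i + window]) / window
        for i in range(len(series) - window + 1)
    ]

# Hypothetical monthly demand in units.
monthly_demand = [100, 120, 110, 130, 150, 140]

trend = moving_average(monthly_demand, window=3)
naive_forecast = trend[-1]  # carry the latest smoothed level forward

print("smoothed trend:", trend)
print("naive next-month forecast:", naive_forecast)
```

Dedicated forecasting methods additionally model seasonality and trend explicitly, but they build on the same idea of separating signal from noise.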

Real-World Applications

Quantitative methods have transformed decision-making processes across numerous industries and sectors, providing evidence-based insights that drive strategic planning and operational efficiency.

Business

In the business world, quantitative methods serve as essential tools for competitive advantage and strategic planning. Market research relies heavily on survey data analysis, consumer behavior modeling, and statistical sampling to understand customer preferences and market trends. Companies use regression analysis to identify factors that influence sales performance, while correlation studies help determine relationships between marketing spend and revenue growth.

Financial forecasting represents another critical application, where time series analysis helps predict future cash flows, stock prices, and economic indicators. Investment firms use quantitative models to assess portfolio risk, optimize asset allocation, and develop algorithmic trading strategies. Retail businesses employ statistical methods to manage inventory levels, predict seasonal demand fluctuations, and optimize pricing strategies.

Quality control processes in manufacturing rely on statistical process control charts and hypothesis testing to maintain product standards and identify production anomalies. Human resources departments use quantitative analysis to evaluate employee performance metrics, predict turnover rates, and assess the effectiveness of training programs. Marketing teams leverage A/B testing and experimental design to optimize campaign performance and measure customer acquisition costs.

Example: E-commerce Customer Retention Analysis

Consider an online retailer facing declining customer retention rates. The company decides to use quantitative methods to identify factors influencing customer loyalty and develop targeted retention strategies.

Data Collection: The company gathers numerical data from multiple sources including customer purchase history, website behavior metrics, demographic information, customer service interactions, and survey responses. This dataset includes variables such as total spending, purchase frequency, time between purchases, website session duration, customer age, and satisfaction scores.

Descriptive Analysis: Initial analysis reveals that the average customer makes 3.2 purchases per year with a mean order value of $85. The data shows 35% of customers haven’t made a purchase in the last six months, indicating potential churn risk.

Correlation Analysis: Statistical analysis identifies moderate to strong positive correlations between customer lifetime value and factors like purchase frequency (r = 0.78), customer service satisfaction (r = 0.65), and email engagement rates (r = 0.52). Conversely, shipping costs show a moderate negative correlation with retention (r = -0.43).

Regression Modeling: A multiple regression model is developed to predict customer lifetime value based on key variables. Holding the other predictors constant, the model estimates that a one-unit increase in monthly purchase frequency is associated with a $127 increase in lifetime value, while each additional day between purchases is associated with a $3 decrease.
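To see how such coefficients translate into a prediction, the sketch below applies the two values quoted above ($127 and $3) to a hypothetical change in customer behavior. The model's intercept and other terms are not given, so only the change in predicted lifetime value is computed. The function name and the scenario are assumptions for illustration.

```python
# Coefficients quoted in the example above.
COEF_MONTHLY_FREQUENCY = 127.0  # dollars per additional monthly purchase
COEF_DAYS_BETWEEN = -3.0        # dollars per additional day between purchases

def predicted_change_in_value(delta_frequency, delta_days_between):
    """Change in predicted lifetime value, other predictors held constant."""
    return (COEF_MONTHLY_FREQUENCY * delta_frequency
            + COEF_DAYS_BETWEEN * delta_days_between)

# Hypothetical scenario: a customer buys 0.5 more times per month and
# shortens the gap between purchases by 10 days.
change = predicted_change_in_value(delta_frequency=0.5, delta_days_between=-10)
print(f"predicted change in lifetime value: ${change:.2f}")
```

The result combines a $63.50 gain from higher frequency with a $30.00 gain from the shorter gap between purchases.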

Hypothesis Testing: A/B testing compares two customer retention strategies. Group A receives personalized product recommendations, while Group B gets standard promotional emails. After three months, a two-proportion test (the appropriate comparison for rates rather than means) shows Group A has a significantly higher retention rate (68% vs. 52%, p < 0.01).
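Because retention is a rate, one common way to compare the two groups is a two-proportion z-test. The sketch below assumes 500 customers per group, a figure that does not appear in the example and is chosen only to make the calculation concrete. The retention rates of 68% and 52% come from the text.

```python
import math
from statistics import NormalDist

def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Two-sided z-test for the difference between two proportions."""
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    # Pooled proportion under the null hypothesis of no difference.
    pooled = (successes_a + successes_b) / (n_a + n_b)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / standard_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Assumed group sizes (hypothetical): 500 customers each.
# 68% of 500 = 340 retained in Group A; 52% of 500 = 260 retained in Group B.
z, p_value = two_proportion_z_test(340, 500, 260, 500)
print(f"z = {z:.2f}, p = {p_value:.2g}")
```

With smaller groups the same 16-point gap would yield a larger p-value, which is why sample size matters as much as the size of the difference.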

Business Impact: Based on these findings, the company implements personalized recommendation systems, reduces shipping costs for frequent customers, and improves customer service processes. Six months later, customer retention increases by 23%, and average lifetime value grows by $156 per customer.

Healthcare

Healthcare represents one of the most critical domains for quantitative methods, where statistical analysis directly impacts patient outcomes, treatment decisions, and public health policies. The rigorous application of these methods ensures evidence-based medicine and helps healthcare professionals make informed decisions about patient care.

Clinical trials form the cornerstone of medical research, utilizing randomized controlled trials (RCTs) to test new treatments and medications. These studies employ careful experimental design, statistical power calculations to determine appropriate sample sizes, and sophisticated statistical analyses to evaluate treatment efficacy and safety. Researchers use hypothesis testing to determine whether observed treatment effects are statistically significant, while confidence intervals provide ranges of plausible treatment benefits.

Epidemiological studies leverage quantitative methods to understand disease patterns, identify risk factors, and track public health trends. Cohort studies follow large populations over time to estimate associations between exposures and health outcomes and, with careful control of confounding, to support causal conclusions, while case-control studies compare individuals with and without a disease to identify potential risk factors. Meta-analyses combine results from multiple studies using statistical techniques to provide more robust evidence for medical decision-making.

Diagnostic accuracy studies use sensitivity and specificity measures to evaluate medical tests and screening procedures. Predictive modeling helps identify patients at high risk for specific conditions, enabling early intervention and preventive care. Quality improvement initiatives in hospitals rely on statistical process control to monitor patient safety indicators, infection rates, and treatment outcomes.
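Sensitivity and specificity both come from a two-by-two table of test results against true disease status. The counts in this sketch are hypothetical and serve only to show the arithmetic.

```python
def diagnostic_accuracy(true_pos, false_pos, false_neg, true_neg):
    """Return (sensitivity, specificity) from confusion-matrix counts."""
    # Sensitivity: share of people with the condition who test positive.
    sensitivity = true_pos / (true_pos + false_neg)
    # Specificity: share of people without the condition who test negative.
    specificity = true_neg / (true_neg + false_pos)
    return sensitivity, specificity

# Hypothetical screening results: 100 people with the condition, 900 without.
sensitivity, specificity = diagnostic_accuracy(
    true_pos=90, false_pos=50, false_neg=10, true_neg=850
)
print(f"sensitivity: {sensitivity:.1%}")
print(f"specificity: {specificity:.1%}")
```

A test can score well on one measure and poorly on the other, so both are usually reported together.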

Health economics applications include cost-effectiveness analyses that compare different treatments using quantitative measures like quality-adjusted life years (QALYs) and incremental cost-effectiveness ratios. Population health surveillance systems use time series analysis to detect disease outbreaks and monitor vaccination effectiveness across communities.
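The incremental cost-effectiveness ratio divides the extra cost of a new treatment by the extra health benefit it delivers. The sketch below uses invented costs and QALY values for two treatments. None of the figures come from a real evaluation.

```python
def icer(cost_new, effect_new, cost_standard, effect_standard):
    """Incremental cost-effectiveness ratio: extra cost per extra unit of effect."""
    delta_effect = effect_new - effect_standard
    if delta_effect == 0:
        raise ValueError("treatments have equal effect; ICER is undefined")
    return (cost_new - cost_standard) / delta_effect

# Hypothetical per-patient costs (dollars) and outcomes (QALYs).
ratio = icer(cost_new=52_000, effect_new=6.5,
             cost_standard=40_000, effect_standard=6.0)
print(f"ICER: ${ratio:,.0f} per QALY gained")
```

Decision-makers typically compare this ratio with a willingness-to-pay threshold, which varies by health system.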

Example: COVID-19 Vaccine Effectiveness Study

During the pandemic, health authorities conducted a large-scale observational study to evaluate COVID-19 vaccine effectiveness in preventing severe disease outcomes among adults aged 65 and older.

Study Design: Researchers implemented a matched case-control study involving 50,000 participants across multiple healthcare systems. Cases were hospitalized patients with confirmed COVID-19, while controls were matched individuals without hospitalization, controlling for age, gender, underlying health conditions, and geographic location.

Data Collection: The study gathered comprehensive data including vaccination status, vaccine type, timing of doses, demographic characteristics, comorbidities, hospitalization dates, disease severity scores, and clinical outcomes. Laboratory data included viral load measurements and antibody levels.

Statistical Analysis: Logistic regression models calculated odds ratios to determine vaccine effectiveness, adjusting for confounding variables such as age, diabetes status, and immunocompromising conditions. The analysis revealed that fully vaccinated individuals had 89% lower odds of hospitalization compared to unvaccinated individuals (OR = 0.11, 95% CI: 0.08-0.15, p < 0.001).
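The step from an odds ratio to a vaccine effectiveness percentage is simple arithmetic: effectiveness is estimated as one minus the odds ratio. The sketch below applies that conversion to the odds ratio and confidence limits quoted above. The function name is illustrative.

```python
def vaccine_effectiveness(odds_ratio):
    """Estimated effectiveness in percent, computed as (1 - OR) * 100."""
    return (1 - odds_ratio) * 100

# Values quoted in the example: OR = 0.11, 95% CI 0.08 to 0.15.
point_estimate = vaccine_effectiveness(0.11)
# The limits swap: the upper OR bound gives the lower effectiveness bound.
lower_bound = vaccine_effectiveness(0.15)
upper_bound = vaccine_effectiveness(0.08)

print(f"effectiveness: {point_estimate:.0f}% "
      f"(95% CI: {lower_bound:.0f}% to {upper_bound:.0f}%)")
```

The same conversion applies to a hazard ratio, so a hazard ratio of 0.08 corresponds to a 92% reduction in hazard.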

Survival Analysis: Kaplan-Meier curves demonstrated significant differences in time to severe outcomes between vaccinated and unvaccinated groups. Cox proportional hazards modeling showed that vaccination reduced the hazard of ICU admission by 92% (HR = 0.08, 95% CI: 0.05-0.13).

Subgroup Analysis: Stratified analyses examined vaccine effectiveness across different demographics and risk groups. Results showed effectiveness rates of 94% in healthy adults, 85% in individuals with diabetes, and 78% in immunocompromised patients.

Time Series Component: Monthly effectiveness estimates revealed declining protection over time, dropping from 95% effectiveness at 2 months post-vaccination to 78% at 6 months, supporting recommendations for booster doses.

Public Health Impact: These quantitative findings directly informed national vaccination policies, booster shot recommendations, and risk stratification guidelines. The statistical evidence supported expanding booster eligibility to high-risk populations and influenced international vaccination strategies, potentially preventing thousands of hospitalizations and deaths.

Education

Educational research and policy development rely extensively on quantitative methods to assess student performance, evaluate teaching effectiveness, and inform curriculum decisions. These statistical approaches help educators and policymakers make data-driven decisions that can improve learning outcomes for students at all levels.

Standardized testing analysis represents a fundamental application where psychometric methods ensure test reliability and validity. Item response theory (IRT) models help calibrate test questions and establish appropriate difficulty levels, while classical test theory provides measures of internal consistency and test-retest reliability. Large-scale assessments use equating procedures to maintain consistent scoring standards across different test versions and administration periods.

Educational intervention studies employ experimental and quasi-experimental designs to evaluate program effectiveness. Randomized controlled trials compare different teaching methods or educational technologies, while regression discontinuity designs assess the impact of policies assigned by a cutoff, such as class size caps or funding eligibility thresholds. Multilevel modeling accounts for the hierarchical structure of educational data, where students are nested within classrooms, schools, and districts.

Achievement gap analysis uses descriptive and inferential statistics to identify disparities in academic performance across demographic groups. Longitudinal studies track student progress over time, employing growth curve modeling to understand learning trajectories and identify factors that promote or hinder academic success. Value-added models attempt to isolate teacher effects on student achievement by controlling for prior performance and student characteristics.

Assessment data mining techniques analyze large educational datasets to identify patterns in student behavior, predict academic risk, and personalize learning experiences. Factor analysis helps researchers understand the underlying constructs measured by educational assessments, while cluster analysis groups students with similar learning profiles for targeted interventions.

Example: State-Wide Mathematics Achievement Gap Analysis

A state education department conducted a comprehensive analysis to understand factors contributing to mathematics achievement gaps between different student populations and evaluate the effectiveness of targeted intervention programs.

Study Scope: The analysis included 150,000 students across 800 schools, examining three years of standardized test data (grades 3-8) along with demographic information, school characteristics, and participation in remedial programs.

Data Collection: Researchers compiled student-level data including annual mathematics test scores, prior academic performance, socioeconomic status, English language learner status, special education classification, attendance rates, and teacher qualifications. School-level variables included per-pupil spending, class sizes, and availability of advanced mathematics courses.

Descriptive Analysis: Initial analysis revealed significant achievement gaps, with low-income students scoring an average of 0.8 standard deviations below their peers, while English language learners showed gaps of 0.6 standard deviations. The data indicated that only 32% of low-income students met proficiency standards compared to 78% of higher-income students.

Multilevel Modeling: Hierarchical linear models partitioned the variance in achievement across the student, school, and district levels. Results showed that 65% of achievement variance occurred among students within schools, 25% between schools, and 10% between districts. Student-level factors explained 45% of the achievement gap, while school-level factors accounted for 30%.

Longitudinal Growth Analysis: Growth curve modeling tracked student progress over three years, revealing that achievement gaps widened over time. Low-income students showed annual growth rates of 0.3 standard deviations compared to 0.5 for higher-income peers, suggesting cumulative disadvantage effects.

Intervention Evaluation: Propensity score matching compared students participating in intensive mathematics support programs with similar non-participants. After adjusting for observed differences between the two groups, participants showed significant improvement (effect size = 0.4), with the largest gains among students who received at least 60 hours of additional instruction.
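An effect size expresses a difference between groups in standard deviation units, which makes results comparable across tests with different scales. The sketch below assumes the effect size is a standardized mean difference (Cohen's d), which the example does not specify, and uses made-up test scores.

```python
import math
from statistics import mean, variance

def cohens_d(treated, control):
    """Standardized mean difference using the pooled sample variance."""
    n_t, n_c = len(treated), len(control)
    pooled_variance = (
        (n_t - 1) * variance(treated) + (n_c - 1) * variance(control)
    ) / (n_t + n_c - 2)
    return (mean(treated) - mean(control)) / math.sqrt(pooled_variance)

# Hypothetical mathematics scores for matched participants and non-participants.
participants = [72, 75, 78, 81, 84]
non_participants = [70, 73, 76, 79, 82]

d = cohens_d(participants, non_participants)
print(f"Cohen's d: {d:.2f}")
```

In this illustration a 2-point difference in means amounts to roughly 0.4 standard deviations, similar in size to the effect reported above.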

Regression Analysis: Multiple regression identified key predictors of mathematics achievement including prior performance (β = 0.72), attendance rate (β = 0.18), teacher experience (β = 0.12), and class size (β = -0.08). The model explained 68% of variance in student outcomes.

Policy Recommendations: Statistical findings led to evidence-based policy changes including expanded access to intensive mathematics support, reduced class sizes in high-need schools, and professional development focused on culturally responsive teaching practices. Follow-up analysis showed a 15% reduction in achievement gaps two years after implementation.

Government and Public Policy

Government agencies and policymakers rely heavily on quantitative methods to understand societal trends, allocate resources effectively, and evaluate the impact of public programs. These statistical approaches provide the empirical foundation for policy decisions that affect millions of citizens and guide the distribution of public resources.

Census data analysis represents one of the most comprehensive applications of quantitative methods in government. Demographic analysis techniques help track population changes, migration patterns, and socioeconomic trends that inform congressional redistricting, federal funding formulas, and long-term planning initiatives. Survey sampling methods ensure representative data collection when full population counts are impractical, while statistical adjustment procedures address potential undercounts in hard-to-reach populations.

Policy evaluation studies employ experimental and quasi-experimental designs to assess program effectiveness and return on investment. Difference-in-differences analysis compares outcomes before and after policy implementation across treated and control groups, while regression discontinuity designs evaluate programs with eligibility thresholds. Cost-benefit analyses quantify program impacts in monetary terms, helping policymakers prioritize competing initiatives.

Economic forecasting uses time series analysis and econometric modeling to predict government revenue, unemployment rates, and economic growth. These projections inform budget planning, monetary policy decisions, and fiscal stimulus measures. Social indicators research tracks quality of life measures, poverty rates, and inequality metrics to monitor societal well-being and identify areas requiring intervention.

Administrative data analysis leverages large government databases to understand service delivery patterns, identify fraud, and optimize operations. Predictive modeling helps agencies anticipate demand for services, while network analysis reveals relationships in complex systems such as transportation infrastructure or social service delivery networks.

Example: Urban Housing Policy Impact Assessment

A metropolitan government conducted a comprehensive quantitative analysis to evaluate the effectiveness of its affordable housing initiative and inform future policy decisions.

Program Overview: The city implemented a mixed-income housing development program over five years, investing $250 million to create 8,000 affordable units across 15 neighborhoods. The analysis aimed to assess impacts on housing affordability, neighborhood stability, and resident outcomes.

Data Integration: Researchers compiled data from multiple government sources including housing authority records, property tax assessments, crime statistics, school enrollment data, business licensing records, and American Community Survey estimates. Individual-level data tracked 12,000 program participants and 25,000 comparison residents over seven years.

Spatial Analysis: Geographic information systems (GIS) analysis mapped housing unit locations and measured proximity to public transportation, schools, and employment centers. Spatial autocorrelation analysis identified clustering patterns in property values and demographic changes, while hot spot analysis detected areas of concentrated development impact.

Difference-in-Differences Design: The study compared changes in neighborhood outcomes between areas that received affordable housing investments and similar areas that did not. Pre-treatment data established baseline conditions, while post-treatment measurements assessed program impacts. Results showed that treated neighborhoods experienced 12% increases in median household income compared to 3% in control areas.
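The difference-in-differences estimate subtracts the change in the comparison areas from the change in the treated areas, so that trends affecting both groups cancel out. The sketch below uses hypothetical income levels chosen to match the 12% and 3% growth figures above. The $50,000 baseline is an assumption, not a reported value.

```python
def diff_in_diff(treated_pre, treated_post, control_pre, control_post):
    """Change in the treated group minus change in the control group."""
    return (treated_post - treated_pre) - (control_post - control_pre)

# Hypothetical median household incomes (dollars), both starting at 50,000.
treated_pre, treated_post = 50_000, 56_000   # 12% growth
control_pre, control_post = 50_000, 51_500   # 3% growth

effect_dollars = diff_in_diff(treated_pre, treated_post,
                              control_pre, control_post)
effect_points = 12 - 3  # the same comparison in percentage points of growth

print(f"estimated program effect: ${effect_dollars:,} "
      f"({effect_points} percentage points)")
```

The estimate is credible only if the two sets of neighborhoods would have followed similar trends without the program, an assumption analysts usually check against pre-treatment data.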

Propensity Score Matching: Individual-level analysis matched program participants with similar non-participants based on demographic characteristics, prior housing history, and neighborhood conditions. Matched comparisons revealed that program participants had 23% lower rates of housing instability and 18% higher rates of employment retention than the matched comparison group.

Time Series Analysis: Monthly crime data analysis used interrupted time series methods to assess safety impacts. Results indicated significant reductions in property crime rates (declining by 15% annually) in neighborhoods with new mixed-income developments, while violent crime showed no significant change.

Economic Impact Assessment: Input-output modeling estimated the program’s economic multiplier effects, showing that every dollar of housing investment generated $1.65 in local economic activity. Construction employment increased by 1,200 jobs annually, while new resident spending supported an additional 800 retail and service positions.

Cost-Effectiveness Analysis: The study calculated program costs per outcome achieved, finding that each stable housing placement cost $28,000 over five years, while comparable market-rate housing would cost residents $45,000. Social return on investment analysis estimated $3.20 in social benefits for every dollar invested, including reduced homelessness services, improved health outcomes, and increased tax revenue.

Policy Impact: Quantitative findings supported expansion of the mixed-income housing program, with the city council approving an additional $150 million investment. The analysis informed new site selection criteria prioritizing transit accessibility and demonstrated the need for complementary workforce development programs. Regional housing authorities adopted similar evaluation frameworks, and the methodology influenced federal housing policy discussions.

FAQs

What is the main purpose of using quantitative methods in research?

Quantitative methods are used to collect and analyze numerical data to identify patterns, test relationships, and make predictions. They help researchers measure things in a clear, objective, and structured way, often using statistics.

How are quantitative methods different from qualitative methods?

Quantitative methods focus on numbers, measurements, and statistical analysis. They answer questions like “how many?” or “what percentage?”

Qualitative methods, on the other hand, explore ideas, opinions, and meanings using words, interviews, or open-ended questions. They answer questions like “why?” or “how?”

What are some tools used in quantitative research?

Common tools include surveys with multiple-choice or scale-based questions, experiments with controlled variables, and software like Excel, SPSS, or R for data analysis. These tools help organize, calculate, and visualize numerical data.